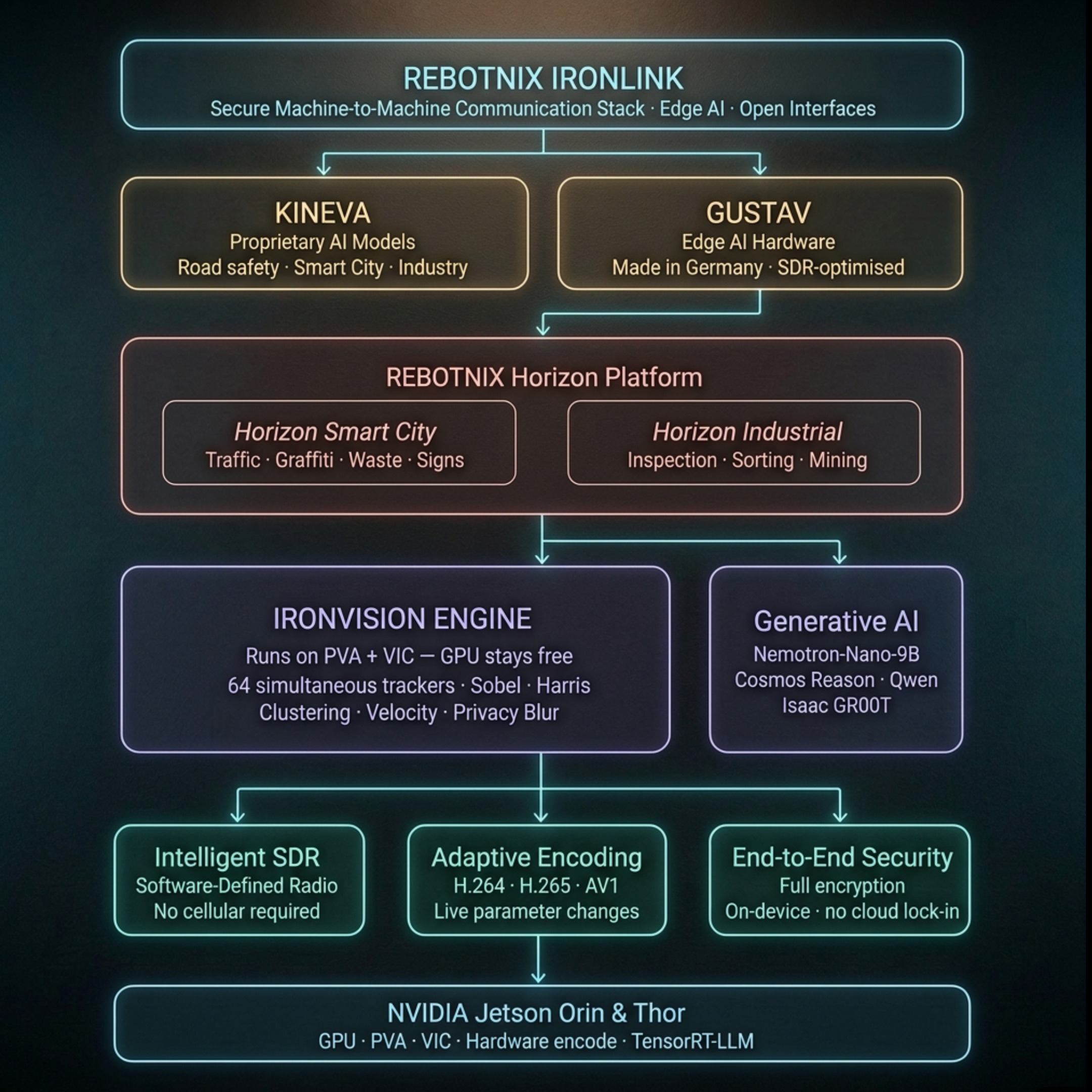

Six engines. One protocol.

IronLink unifies every compute layer a physical machine needs into a single, deterministic protocol running entirely at the edge.

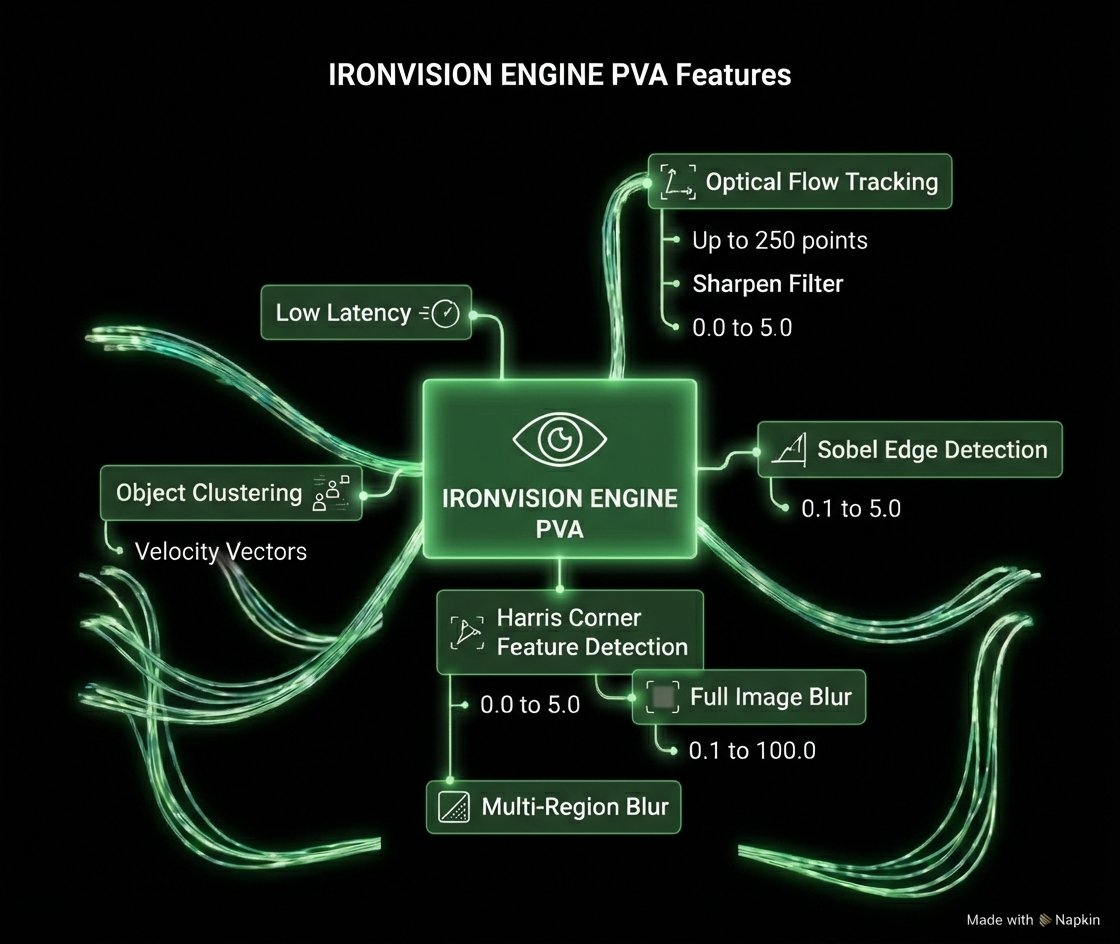

IRONVISION Engine.

Real-time computer vision running directly on the NVIDIA Jetson PVA (Programmable Vision Accelerator) and VIC (Video Image Compositor) hardware accelerators. 64 object trackers run simultaneously and independently of the GPU, leaving its full CUDA capacity free for AI inference and other workloads.

Generative AI at the Edge.

Run large language models and vision-language models locally via TensorRT-LLM, with full support for KINEVA vision-language and large language models. No cloud round-trips, no data leaving the device, no latency penalties.

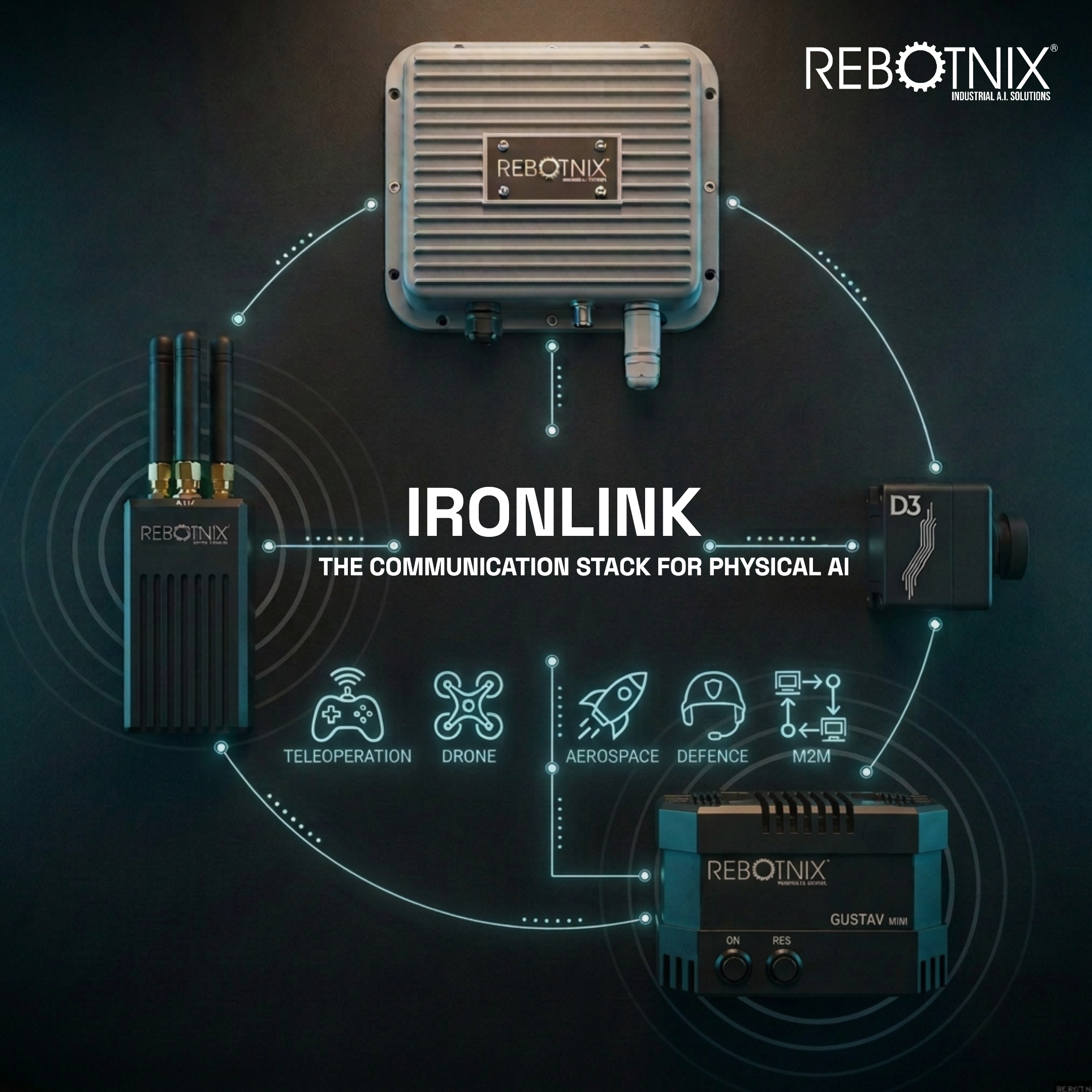

Intelligent SDR.

Software-Defined Radio for mesh communication between machines. Dynamic frequency hopping, end-to-end encryption, and autonomous link management. No cellular infrastructure, no SIM cards, no subscriptions.
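The SDR paragraph mentions frequency hopping without describing how both ends of a link stay in step. A common approach, sketched here under stated assumptions, is for each radio to derive the hop sequence from a shared key and a slot counter using a keyed hash (HMAC-SHA256), and to skip channels both ends have marked as blocked, in the style of adaptive frequency hopping. The 50-channel plan, `hop_channel`, `hop_sequence`, and the blocklist are hypothetical illustrations, not IronLink's actual radio design; key exchange and slot timing synchronization are not shown.

```python
import hashlib
import hmac

# Hypothetical 50-channel plan (index -> center frequency in MHz).
# Illustrative only; not IronLink's actual band plan.
NUM_CHANNELS = 50


def channel_mhz(index: int) -> float:
    """Center frequency for a channel index in this illustrative plan."""
    return 902.5 + 0.5 * index


def hop_channel(shared_key: bytes, slot: int, blocked: frozenset = frozenset()) -> int:
    """Channel index for a time slot, derived from a key shared by both ends of the link."""
    usable = [c for c in range(NUM_CHANNELS) if c not in blocked]
    if not usable:
        raise ValueError("every channel is blocked")
    # Keyed hash of the slot counter: without the key, the next hop is unpredictable.
    digest = hmac.new(shared_key, slot.to_bytes(8, "big"), hashlib.sha256).digest()
    # Reduce to an index among usable channels (small modulo bias accepted for brevity).
    return usable[int.from_bytes(digest[:8], "big") % len(usable)]


def hop_sequence(shared_key: bytes, start_slot: int, count: int,
                 blocked: frozenset = frozenset()) -> list:
    """Channel indices for `count` consecutive slots starting at `start_slot`."""
    return [hop_channel(shared_key, s, blocked) for s in range(start_slot, start_slot + count)]


if __name__ == "__main__":
    key = b"example-shared-key"  # hypothetical; real keys would come from a key exchange
    seq = hop_sequence(key, 0, 8, blocked=frozenset({3, 17}))
    print([channel_mhz(c) for c in seq])
```

Because the sequence depends only on the key, the slot number, and the agreed blocklist, two radios with synchronized clocks compute identical hops without transmitting the schedule.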

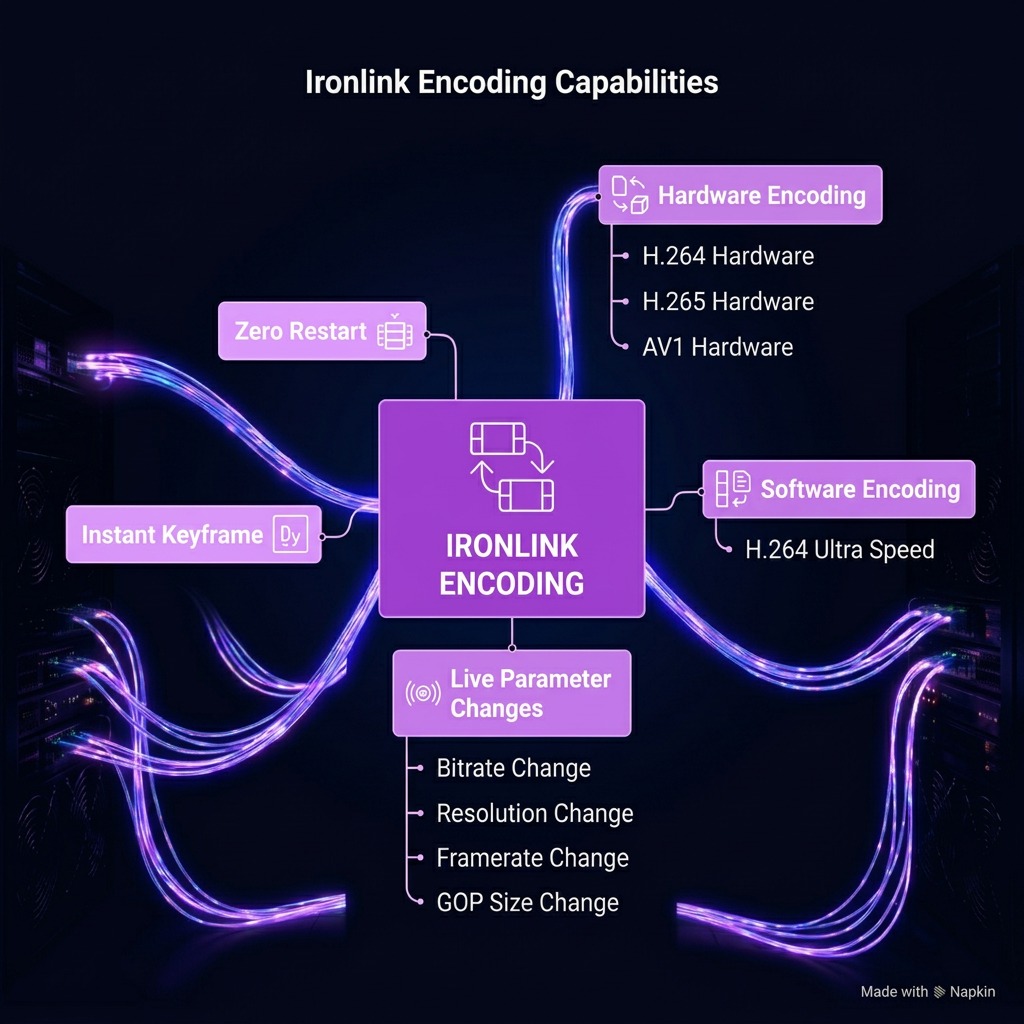

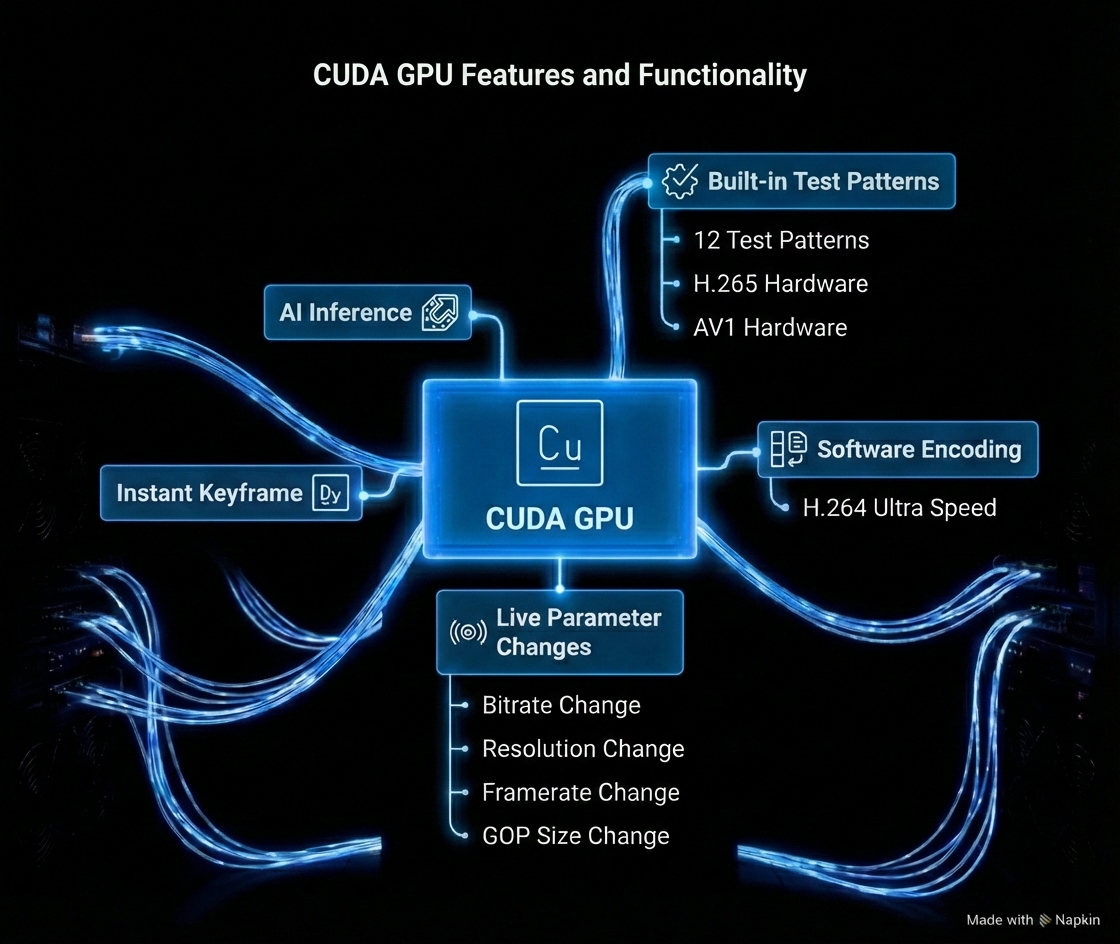

Adaptive Video Encoding.

Hardware-accelerated H.264, H.265, and AV1 encoding with live parameter adjustment. Bitrate, resolution, and codec can change on the fly based on network conditions or mission requirements. Zero CPU overhead.
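Adjusting encoder parameters to network conditions is often done with a bitrate ladder plus hysteresis, so the stream does not oscillate between quality levels. The sketch below assumes a hypothetical four-rung ladder and illustrative thresholds (`down_at`, `up_at`); `next_rung`, `settings_for`, and `EncoderSettings` are names invented for this example, not IronLink's API. It only selects settings; applying them to the hardware encoder is not shown.

```python
from dataclasses import dataclass

# Hypothetical ladder, highest quality first: (width, height, bitrate in kbps).
# Illustrative values only; not IronLink's actual encoder profiles.
LADDER = [
    (1920, 1080, 4000),
    (1280, 720, 2500),
    (960, 540, 1200),
    (640, 360, 600),
]
CODECS = {"h264", "h265", "av1"}


@dataclass(frozen=True)
class EncoderSettings:
    codec: str
    width: int
    height: int
    bitrate_kbps: int


def next_rung(current: int, throughput_kbps: float,
              down_at: float = 0.9, up_at: float = 0.7) -> int:
    """Return the ladder index to use for the next interval.

    Step down while the current bitrate exceeds down_at * throughput.
    Step up one rung only if the higher rung fits under up_at * throughput;
    the gap between the two thresholds (hysteresis) prevents oscillation.
    """
    start = current
    while current < len(LADDER) - 1 and LADDER[current][2] > down_at * throughput_kbps:
        current += 1
    if current == start and current > 0 and LADDER[current - 1][2] <= up_at * throughput_kbps:
        current -= 1
    return current


def settings_for(index: int, codec: str = "h265") -> EncoderSettings:
    """Combine a ladder rung with a codec chosen by mission requirements."""
    if codec not in CODECS:
        raise ValueError(f"unsupported codec: {codec}")
    width, height, bitrate = LADDER[index]
    return EncoderSettings(codec, width, height, bitrate)


if __name__ == "__main__":
    rung = 0
    for measured in [10000, 2000, 3000, 4000, 100]:  # measured throughput samples, kbps
        rung = next_rung(rung, measured)
        print(measured, settings_for(rung))
```

Stepping down is immediate because a congested link drops frames, while stepping up waits for clear headroom and moves one rung at a time.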

End-to-End Security.

All data is encrypted on-device before it leaves the processor. Confidential computing ensures keys never exist in cleartext outside the secure enclave. No cloud dependency, no trust delegation.

Built for NVIDIA Jetson.

Optimized from the ground up for NVIDIA Jetson Orin NX and AGX Thor. Every engine leverages dedicated hardware accelerators. No emulation, no abstraction layers, no wasted silicon.